Goodbye Scribbles, Hello Logic: Why the “Nano Banana” Gemini Image Model Is the First AI Tool I Actually Trust

The Big Shift: From Dreamy Art Toy to Logical Powerhouse

For years, generative AI felt like handing a toddler a box of expensive crayons. The results (Midjourney’s painterly landscapes, DALL-E’s quirky doodles) were beautiful but often functionally useless. Need legible text in an image? You got alien glyphs. Need the same character to look the same across several images? Roll the dice.

That changed in 2025 with the arrival of the models Google officially calls Gemini 2.5 Flash Image and Gemini 3 Pro Image. Most of us know them by their much funnier codename: Nano Banana (and, for the larger model, Nano Banana Pro). This wasn’t just another incremental update; it was a cognitive shift. Nano Banana is the first visual AI that actually seems to think before it starts drawing.

The core difference? Gemini 3 Pro Image is built on Google’s Gemini 3 Pro large language model (LLM) and inherits its reasoning ability. It can plan a composition before rendering it, check facts against live Google Search results, and keep track of complex, multi-element scenes. This is the difference between a model that “draws what you say” and one that “understands what you mean.”

Capability 1: The Ramen Car Test and Search Grounding

If you’re wondering how we tested its “reasoning,” look no further than the infamous “Ramen Car” Test.

Ask older models (like Midjourney v6) for “a car made of ramen noodles,” and they’d simply slap a noodle texture onto a metal car body. Nano Banana Pro, however, reasoned that each part of the car should be built from an ingredient whose shape suits that part. The result? A vehicle with nori (seaweed) wheels and a boiled-egg windshield. This isn’t just clever; it suggests the model applies semantic logic (round food = wheel) instead of simple texture mapping.

This logical backbone is paired with Search Grounding, the single most important feature for enterprise users. Tired of AI making up data? With grounding enabled, Nano Banana Pro runs a live Google Search query to check facts before it generates the image. Ask it for a chart of “last week’s stock performance of Tech Corp,” and it retrieves the real figures instead of inventing them, reducing visual hallucinations. It’s an AI technical illustrator with a built-in fact-checker.

Usability 1: The Adobe Integration and Conversational Editing

Technical wizardry is useless if the tool doesn’t fit your workflow. This is where the Adobe Partnership changes the game. Google didn’t try to lock this masterpiece in a Google-branded digital cage; they put it right inside the creative fortress.

Nano Banana Pro is a partner model available within Adobe Firefly, Photoshop, and Illustrator.

- Zero-Shot Editing: Gemini 2.5 Flash Image (the faster, cheaper sibling) excels at zero-shot image editing. You upload a photo and describe the change in plain language (“add a pair of sunglasses and make the sky purple”) without any manual masking or inpainting. The model infers which regions to edit from the instruction itself, saving professional creatives mountains of time.

- Visual Consistency: Storytellers, rejoice! The old “character consistency” nightmare is largely over. Nano Banana Pro’s superpower is its reference stack of up to 14 images. You can supply multiple shots of a character, a specific product, and a background style, and the model synthesizes a new image that keeps all of those references intact. That means you can finally generate comic panels in which the protagonist doesn’t magically change hair color from one frame to the next.

Testing & The Honest Truth (The Banana Peel Flaw)

For all its revolutionary logic, Nano Banana isn’t flawless. Since this is an honest review, we have to talk about the “Refusal to Edit” loop.

In roughly half of our subtle conversational edits (“make the light slightly warmer”), the model returned the original image unchanged. When prompted again, the LLM backend often apologizes but still fails to apply the change, trapping you in a frustrating loop. It’s as if the model decides the change is unnecessary or too close to a policy boundary and quietly returns a no-op.
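If you script edits rather than working in a chat window, you can at least detect the unchanged-image case instead of waiting on it. The sketch below is a hypothetical wrapper, not part of any Google or Adobe API: `edit` stands in for whatever call you use to send an image and an instruction to the model, and `edit_with_retry` and `fake_edit` are illustrative names. The check is a plain byte comparison, so it only catches the case where the exact original bytes come back; a re-encoded but visually identical image would slip through.

```python
from typing import Callable, Sequence, Tuple

# Hypothetical signature: (image bytes, instruction) -> returned image bytes.
# Swap in your real client call; nothing here talks to an actual model.
EditFn = Callable[[bytes, str], bytes]


def edit_with_retry(edit: EditFn, image: bytes, prompts: Sequence[str]) -> Tuple[bytes, int]:
    """Try each phrasing in order and return the first result that differs from the input.

    Returns (image, attempts_used). If every attempt echoes the input back,
    the original image is returned together with len(prompts).
    """
    for attempt, prompt in enumerate(prompts, start=1):
        result = edit(image, prompt)
        # Byte-for-byte comparison: only detects an exact echo of the input.
        if result != image:
            return result, attempt
    return image, len(prompts)


def fake_edit(image: bytes, prompt: str) -> bytes:
    # Stand-in "model" that ignores vague requests, mimicking the loop described above.
    if "slightly" in prompt:
        return image
    return image + b"+warm"


result, attempts = edit_with_retry(
    fake_edit,
    b"original",
    ["make the light slightly warmer", "make the light noticeably warmer, like golden hour"],
)
print(result, attempts)  # b'original+warm' 2
```

Rephrasing with a more explicit instruction is only a guess at what breaks the loop. Since simply repeating the request often doesn't help, the wrapper stops after the last phrasing and hands back the original rather than retrying forever.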

Furthermore, while vastly improved, the classic “hands problem” (extra fingers or malformed limbs) persists, especially in complex compositions. Large, plain surfaces such as concrete or bare walls can also show low-quality noise patterns, a reminder that the image is, in fact, AI-generated.

Final Verdict: Should You Peel the Nano Banana?

Absolutely. The Nano Banana ecosystem represents the maturation of generative AI.

The fast, cheap Flash variant is a daily productivity hack for quick edits and rapid prototyping. The more powerful Pro variant, with its Thinking Mode and Search Grounding, is the enterprise solution we’ve been waiting for. The price is higher ($0.24 per 4K image), but the much better odds of getting a logically correct, factually grounded, and compositionally planned asset justify the cost compared with the hours spent fighting a hallucinating model.
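To put the $0.24 figure in context, here is a back-of-the-envelope budget helper. It is a hypothetical sketch, not a Google pricing tool: the only number taken from this review is the 4K Pro price, `batch_cost` is an illustrative name, and it assumes every attempt, including re-rolls, is billed at that same per-image rate.

```python
# Back-of-the-envelope cost helper (hypothetical, not an official pricing API).
# The $0.24 figure is the 4K Pro price quoted in this review.
PRICE_PER_4K_IMAGE_USD = 0.24


def batch_cost(images: int, attempts_per_image: float = 1.0,
               price: float = PRICE_PER_4K_IMAGE_USD) -> float:
    """Estimated spend in USD, assuming every attempt is billed at the same price."""
    if images < 0:
        raise ValueError("images must be non-negative")
    if attempts_per_image < 1:
        raise ValueError("each image needs at least one attempt")
    # Round to cents to hide floating-point noise in the result.
    return round(images * attempts_per_image * price, 2)


print(batch_cost(100))                          # 24.0
print(batch_cost(100, attempts_per_image=1.5))  # 36.0
```

Plug in your own re-roll rate: if subtle edits often need a second attempt, the effective price per finished asset climbs accordingly, which is worth weighing against the time you'd otherwise spend on manual fixes.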

Nano Banana is not an artistic replacement; it’s a technical illustrator and a creative assistant that finally adheres to the laws of logic. It has shifted AI from a fun, chaotic dream engine to a necessary, dependable tool.

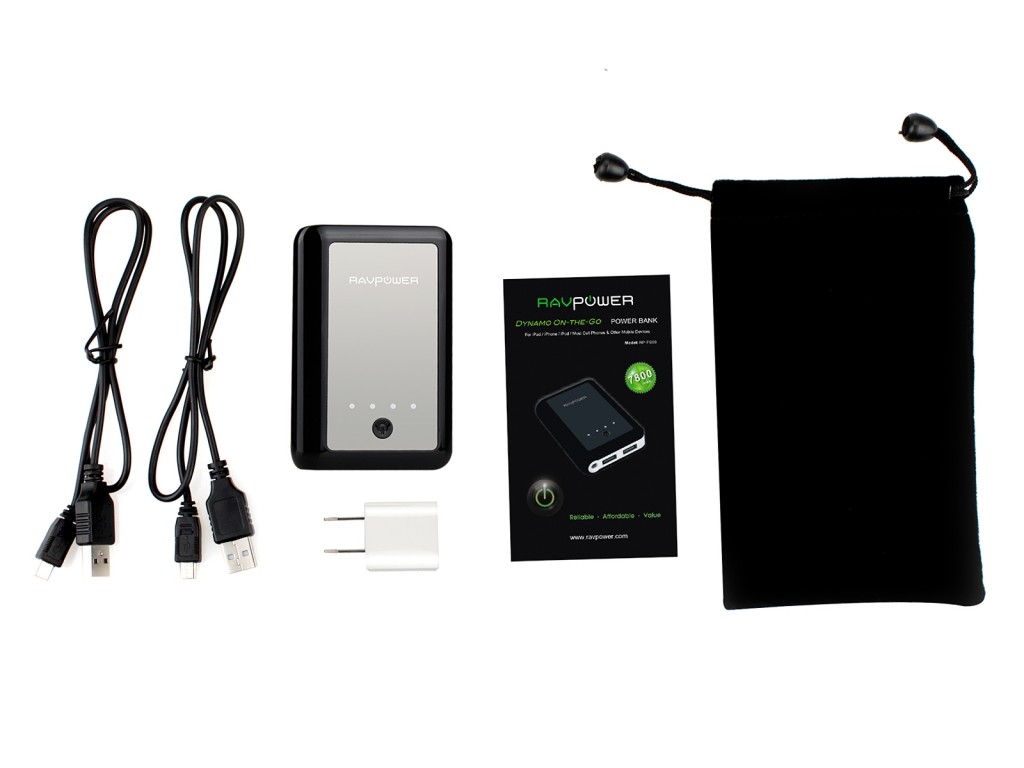

All I did was upload the first image and ask Gemini to add the characters from it, defending the city against Pikachu.