In artificial intelligence (AI) and deep learning, access to substantial computing power is essential. Cloud GPU services offer undeniable flexibility, but a growing number of AI professionals, researchers, and startups are investing in their own local GPU servers. The motivation is practical rather than a matter of preference: a local server provides a powerful, private, and predictable environment that can speed up development.

Owning a local GPU server for deep learning and AI model training offers several advantages that address the challenges of relying solely on external cloud resources.

Long-Term Cost-Effectiveness: A Smart Investment

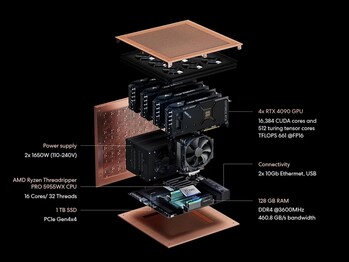

At first glance, the upfront cost of a dedicated GPU server can seem substantial compared with the pay-as-you-go model of cloud services. For sustained, intensive AI workloads, however, that initial investment can turn into significant long-term savings. Cloud GPU instances are billed for as long as they run, including idle time and runs lost to unexpected interruptions, whereas the ongoing costs of owned hardware are mainly electricity, cooling, and maintenance. Autonomous Inc. cites its Brainy workstation as an example: compared with continuous cloud rentals, users can save thousands of dollars within a few months, making it a financially sound choice for ongoing projects.
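To make the cost argument concrete, the sketch below computes a simple break-even point: the number of months after which cumulative cloud rental spend exceeds the purchase price plus running costs of a local server. It is a minimal model under stated assumptions, not a pricing tool. The names (`CostAssumptions`, `breakeven_months`) are hypothetical, the model ignores depreciation, resale value, staff time, and cloud discounts, and every figure is a placeholder you would replace with real quotes.

```python
"""Rough break-even estimate: owning a GPU server vs. renting cloud GPUs.

All prices are caller-supplied placeholder assumptions; none of them
come from Autonomous Inc. or any cloud provider.
"""

import math
from dataclasses import dataclass


@dataclass
class CostAssumptions:
    hardware_cost: float        # one-time purchase price of the local server (USD)
    local_cost_per_hour: float  # electricity, cooling, upkeep per hour of use (USD)
    cloud_cost_per_hour: float  # cloud rental price per billed hour (USD)
    hours_per_month: float      # hours the same workload runs each month


def breakeven_months(a: CostAssumptions) -> float:
    """Months until cumulative cloud spend exceeds the total cost of owning.

    Owning:  hardware_cost + local_cost_per_hour * hours * months
    Renting: cloud_cost_per_hour * hours * months
    Returns math.inf if renting is never more expensive.
    """
    hourly_saving = a.cloud_cost_per_hour - a.local_cost_per_hour
    if hourly_saving <= 0 or a.hours_per_month <= 0:
        return math.inf
    monthly_saving = hourly_saving * a.hours_per_month
    return a.hardware_cost / monthly_saving


if __name__ == "__main__":
    # Hypothetical figures for illustration only, not real quotes.
    example = CostAssumptions(
        hardware_cost=10_000,
        local_cost_per_hour=0.25,
        cloud_cost_per_hour=2.25,
        hours_per_month=500,
    )
    print(breakeven_months(example))  # 10.0
```

The formula makes the article's point explicit: the more hours per month a workload runs, the larger the monthly saving and the sooner the hardware pays for itself, while light or occasional use may never break even.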

Enhanced Data Privacy and Security: Keeping Your Innovations Safe and Confidential

With data breaches, intellectual property theft, and regulatory requirements such as GDPR and HIPAA all major concerns, the privacy and security advantages of a local GPU server carry real weight. For organizations and individuals handling sensitive information or proprietary algorithms, this is one of the strongest reasons to choose an on-premises solution.

- Unrivaled Local Control: Your sensitive data, proprietary AI models, and confidential research stay within your physical control, inside your own infrastructure and behind your own firewalls and security protocols. This reduces the risk of data breaches, unauthorized access, and compliance issues that can arise when data is stored and processed on third-party cloud servers, where you have less direct oversight.

- Minimized Exposure to External Threats: Keeping data and computation local reduces the need to move data between your environment and external cloud providers. Fewer transfers mean fewer points of vulnerability and a smaller attack surface, which strengthens your overall security posture.

Unparalleled Performance and Responsiveness: Unleashing True AI Power

One of the most immediate benefits of a local GPU server is its performance and responsiveness. With computing power on-premises, you get:

- No Queuing: The frustration of waiting in line for available cloud resources becomes a thing of the past. You have immediate, dedicated access to your computing power precisely when you need it.

- Zero Internet Lag: All computation happens locally, so there is no latency or slowdown from internet-dependent cloud connections. This matters most for iterative prototyping and fine-tuning, where fast turnaround speeds up development, and for real-time inference, where every millisecond counts.

- Consistent Performance: Your AI models run without interruption from network fluctuations or contention with other users on shared cloud infrastructure, so training runs complete efficiently and reliably.

Maximum Flexibility and Customization: Tailoring Your AI Environment

A local server grants you an unparalleled degree of control over your computing environment:

- Hardware Control: You can select and configure the exact hardware components (the number and type of GPUs, RAM, storage, and CPU) to fit your specific deep learning tasks and budget, allowing highly specialized setups optimized for your needs.

- Software Environment: You can meticulously set up and customize your entire software stack, including the operating system, drivers, AI frameworks (like TensorFlow or PyTorch), and libraries. This freedom from cloud provider limitations or pre-configured images enables deep optimization for unique and cutting-edge workflows.

Reliability and Predictable Operations: Peace of Mind for Critical Projects

For critical AI workloads, predictability is key, and a local server delivers just that:

- No Spot Instance Shutdowns: Cloud “spot instances” are often cheaper, but the provider can shut them down with little warning. On a local server, no provider can reclaim your hardware mid-run, so crucial training runs are not cut short and progress is not lost.

- Full Control Over Maintenance: You dictate when and how to perform system maintenance or updates, ensuring that your vital AI workloads are never interrupted by unforeseen actions from a third-party provider.

Hands-On Learning and Experimentation: Deepening Your Expertise

For those looking to truly master the intricacies of AI development, a local server offers an invaluable educational experience:

- Deeper Understanding: Owning and managing your hardware provides a hands-on opportunity to learn about system administration, hardware optimization, and the fundamental workings of AI workflows.

- Unrestricted Experimentation: You can freely experiment with different hardware configurations, driver versions, and software stacks without incurring additional costs or worrying about impacting a shared environment. This fosters a deeper understanding and encourages innovative problem-solving.

“We’re seeing innovative companies recognize the need and engineer solutions specifically to address the cloud’s limitations for many businesses,” says Mr. Dhiraj Patra, a Software Architect and certified AI/ML Engineer for Cloud applications. “The ability to have dedicated, powerful GPU workstations on-site, like the Brainy workstation with its NVIDIA RTX 4090s, provides that potent combination of performance, cost-effectiveness, and data security that is often the sweet spot for SMBs looking to seriously leverage AI and GenAI without breaking the bank or compromising on data governance.”

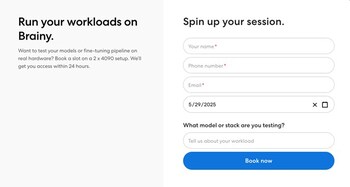

Experience Brainy Firsthand: The Test Model Program

To give developers, researchers, and AI builders a chance to experience Brainy before committing, Autonomous Inc. has announced that a sample unit of Brainy, the supercomputer equipped with dual NVIDIA RTX 4090 GPUs, is now open for testing, so you can see firsthand how your models perform on it.

How the Test Model Works:

Brainy functions as a high-performance desktop-class system, designed for serious AI workloads like hosting, training, and fine-tuning models. It can be accessed locally or remotely, depending on your setup. Think of it as your own dedicated AI workstation: powerful enough for enterprise-grade inference and training tasks, yet flexible enough for individual developers and small teams to use without the complexities of cloud infrastructure.

To get started, click the “Try Now” button on Autonomous’ website and fill out a form; the test system will be ready within a day. The hardware trial program lets participants book a 22-hour slot to run their inference tasks on these GPUs. Whether you’re building AI agents, running multimodal models, or experimenting with cutting-edge architectures, the program lets you validate performance on your own terms, without guesswork. It’s a simple promise: use it like it’s yours, then decide.

In conclusion, a local GPU server like Autonomous Inc.’s Brainy is more than just powerful hardware; it’s a strategic investment in autonomy, efficiency, and security. By providing a private, predictable, and highly customizable environment, it empowers AI professionals to iterate faster, safeguard sensitive data, and ultimately accelerate their journey in the exciting world of deep learning and AI innovation.

Availability

Brainy is available for order, making enterprise-grade AI performance accessible to startups and innovators. For detailed specifications, configurations, and pricing, please visit https://www.autonomous.ai/robots/brainy.

About Autonomous Inc.

Autonomous Inc. designs and engineers the future of work, empowering individuals who refuse to settle and relentlessly pursue innovation. By continually exploring and integrating advanced technologies, the company’s goal is to create an ultimate smart office, including 3D-printed ergonomic chairs, configurable smart desks, and solar-powered work pods, as well as enabling businesses to create the future they envision with a smart workforce using robots and AI.
